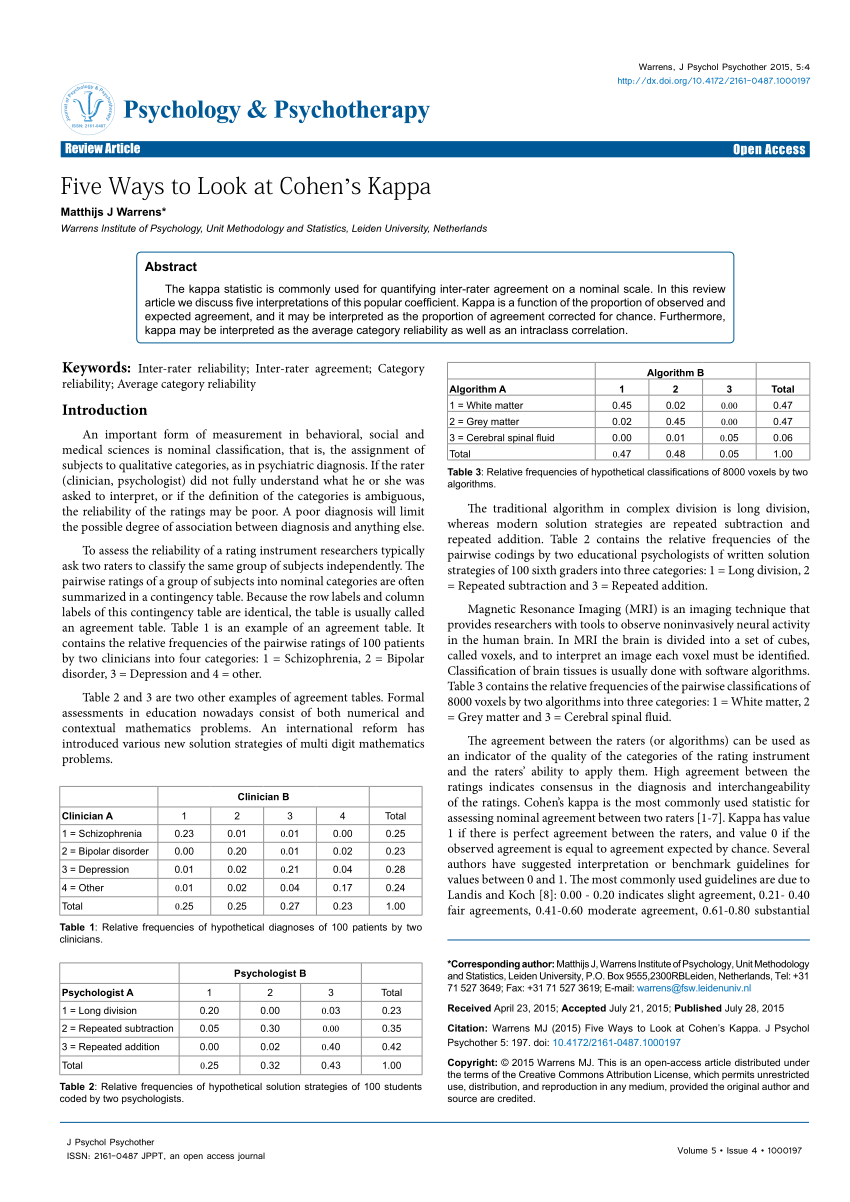

Agree or Disagree? A Demonstration of An Alternative Statistic to Cohen's Kappa for Measuring the Extent and Reliability of Ag

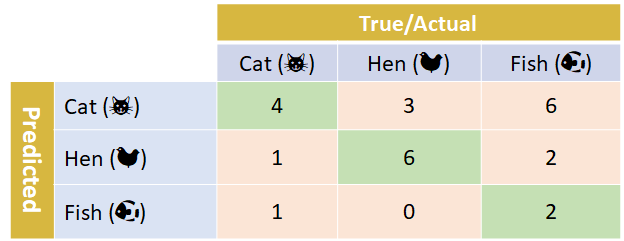

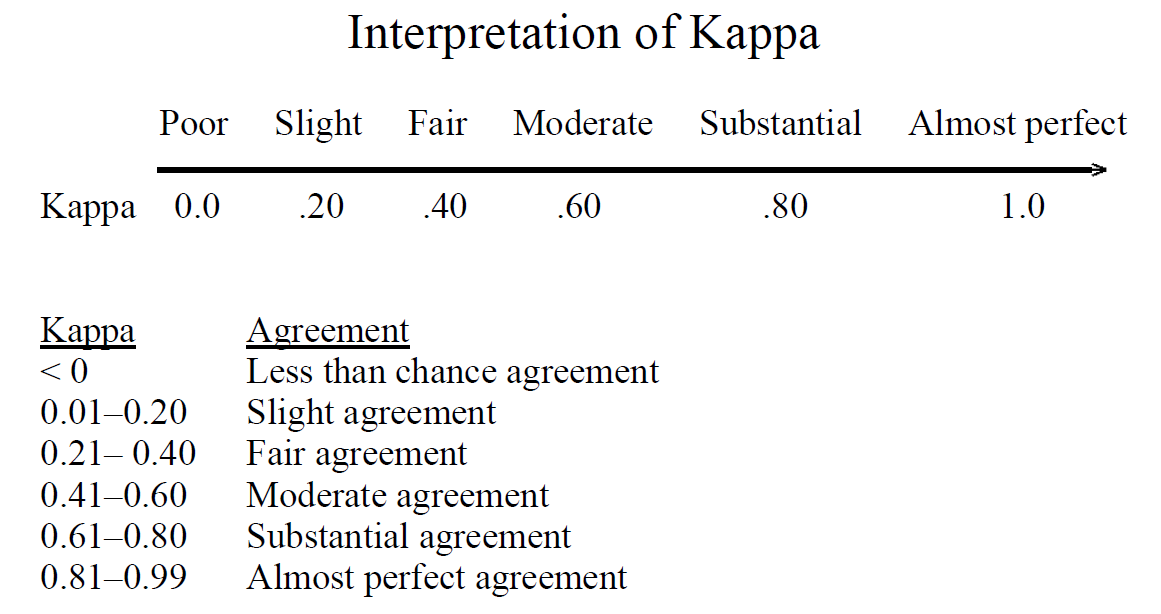

Multi-Class Metrics Made Simple, Part III: the Kappa Score (aka Cohen's Kappa Coefficient) | by Boaz Shmueli | Towards Data Science
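The kappa score in the multi-class setting is usually computed from a square confusion matrix, as (p_o − p_e) / (1 − p_e). Here p_o is the share of items on the diagonal and p_e is the chance agreement implied by the two raters' marginal totals. The sketch below is a minimal illustration, not code from the linked article. The function name `cohens_kappa` and the example counts are hypothetical, and it assumes p_e < 1 so the denominator is non-zero.

```python
def cohens_kappa(confusion):
    """Cohen's kappa from a square confusion matrix (hypothetical helper).

    confusion[i][j] counts items that rater A put in class i
    and rater B put in class j. Assumes chance agreement p_e < 1.
    """
    k = len(confusion)
    n = sum(sum(row) for row in confusion)
    # Observed agreement: share of all items that sit on the diagonal.
    p_o = sum(confusion[i][i] for i in range(k)) / n
    # Marginal totals for rater A (rows) and rater B (columns).
    row_totals = [sum(confusion[i]) for i in range(k)]
    col_totals = [sum(confusion[i][j] for i in range(k)) for j in range(k)]
    # Chance agreement: sum over classes of the product of marginal proportions.
    p_e = sum(row_totals[c] * col_totals[c] for c in range(k)) / (n * n)
    return (p_o - p_e) / (1 - p_e)


# Illustrative 2x2 table: 35 of 50 items agree (p_o = 0.7), chance p_e = 0.5.
matrix = [
    [20, 5],
    [10, 15],
]
print(round(cohens_kappa(matrix), 3))
```

With p_o = 0.7 and p_e = 0.5 the example gives κ = 0.2 / 0.5 = 0.4. The same function works unchanged for three or more classes.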

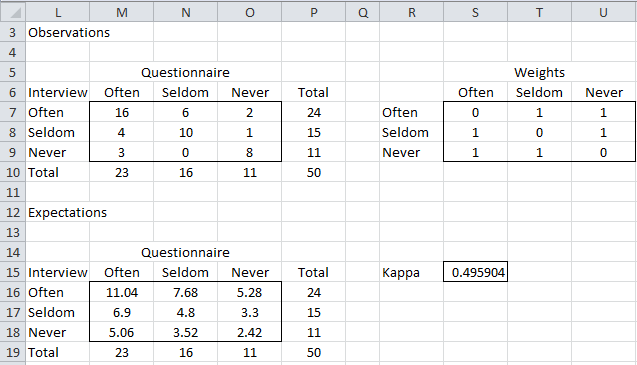

Understanding the calculation of the kappa statistic: A measure of inter-observer reliability | Semantic Scholar

A COMPARISON OF COHEN'S KAPPA AND GWET'S AC1 WITH A MASS SHOOTING CLASSIFICATION INDEX: A STUDY OF RATER UNCERTAINTY By ASHL
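Gwet's AC1 keeps the same (p_o − p_e) / (1 − p_e) form as Cohen's kappa. It replaces the chance term with p_e = Σ π_k(1 − π_k) / (q − 1), where π_k is the two raters' averaged proportion for category k and q is the number of categories. The sketch below is a hypothetical illustration, not the cited study's code. It assumes exactly two raters and at least two categories, and it takes the category set to be the labels actually observed. The example data show the familiar pattern in which raw agreement is high but a skewed prevalence pulls kappa toward zero, while AC1 stays high.

```python
def agreement_stats(a, b):
    """Return (p_o, Cohen's kappa, Gwet's AC1) for two raters' label lists.

    Hypothetical helper; assumes two raters and at least two observed categories.
    """
    if len(a) != len(b) or not a:
        raise ValueError("need two non-empty rating lists of equal length")
    n = len(a)
    categories = sorted(set(a) | set(b))
    q = len(categories)
    if q < 2:
        raise ValueError("AC1 needs at least two categories")
    # Observed agreement: fraction of items both raters labelled identically.
    p_o = sum(x == y for x, y in zip(a, b)) / n
    # Each rater's marginal proportion per category.
    pa = {c: a.count(c) / n for c in categories}
    pb = {c: b.count(c) / n for c in categories}
    # Cohen: chance agreement is the sum of products of the marginals.
    pe_kappa = sum(pa[c] * pb[c] for c in categories)
    # Gwet AC1: chance agreement built from the averaged marginals.
    pi = {c: (pa[c] + pb[c]) / 2 for c in categories}
    pe_ac1 = sum(pi[c] * (1 - pi[c]) for c in categories) / (q - 1)
    kappa = (p_o - pe_kappa) / (1 - pe_kappa)
    ac1 = (p_o - pe_ac1) / (1 - pe_ac1)
    return p_o, kappa, ac1


# Illustrative data: 90 joint "neg", 10 split disagreements, prevalence skewed.
rater_a = ["neg"] * 90 + ["pos"] * 5 + ["neg"] * 5
rater_b = ["neg"] * 90 + ["neg"] * 5 + ["pos"] * 5
p_o, kappa, ac1 = agreement_stats(rater_a, rater_b)
print(p_o, round(kappa, 3), round(ac1, 3))
```

Here the raters agree on 90% of items, yet kappa is slightly negative (p_e = 0.905). AC1's chance term is only 0.095, so AC1 comes out near 0.89.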

Inter-rater agreement Kappas. a.k.a. inter-rater reliability or… | by Amir Ziai | Towards Data Science
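When more than two raters label each item, Fleiss' kappa is one common generalisation. It averages the per-subject share of agreeing rater pairs (P̄), compares this with the chance value P_e = Σ p_j², and reports (P̄ − P_e) / (1 − P_e). The sketch below is a minimal, hypothetical implementation, not the linked article's code. It assumes every subject is rated by the same number n ≥ 2 of raters and that P_e < 1.

```python
def fleiss_kappa(counts):
    """Fleiss' kappa (hypothetical helper).

    counts[i][j] = number of raters who put subject i in category j.
    Every subject must receive the same number n >= 2 of ratings.
    """
    N = len(counts)
    n = sum(counts[0])
    if n < 2 or any(sum(row) != n for row in counts):
        raise ValueError("each subject needs the same number (>= 2) of ratings")
    k = len(counts[0])
    # Proportion of all N*n ratings that fall in each category.
    p = [sum(row[j] for row in counts) / (N * n) for j in range(k)]
    # Per-subject agreement: fraction of rater pairs that agree on subject i.
    P = [(sum(c * c for c in row) - n) / (n * (n - 1)) for row in counts]
    P_bar = sum(P) / N
    # Chance agreement from the overall category proportions.
    P_e = sum(pj * pj for pj in p)
    return (P_bar - P_e) / (1 - P_e)


# Illustrative data: 4 subjects, 3 raters, 2 categories.
ratings = [[3, 0], [0, 3], [2, 1], [1, 2]]
print(round(fleiss_kappa(ratings), 3))
```

For these counts P̄ = 2/3 and P_e = 0.5, so κ = 1/3.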

File:Comparison of rubrics for evaluating inter-rater kappa (and intra-class correlation) coefficients.png - Wikimedia Commons
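The rubrics compared in that figure differ mainly in where they draw the cut points. As one example, the widely cited Landis and Koch (1977) benchmarks can be encoded as a simple lookup. The function name below is hypothetical. Other rubrics (for instance Fleiss' or Altman's) use different bands, so treat these labels as one convention rather than a standard.

```python
def landis_koch_label(kappa):
    """Map a kappa value to a Landis & Koch (1977) descriptor (hypothetical helper)."""
    if not -1.0 <= kappa <= 1.0:
        raise ValueError("kappa must lie in [-1, 1]")
    if kappa < 0:
        return "poor"
    # Upper bound (inclusive) of each band, checked in increasing order.
    bands = [(0.20, "slight"), (0.40, "fair"), (0.60, "moderate"), (0.80, "substantial")]
    for upper, label in bands:
        if kappa <= upper:
            return label
    return "almost perfect"


print(landis_koch_label(0.4), landis_koch_label(0.75))
```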


An analysis of qualitative responses from a UK survey of the psychosocial wellbeing of people with skin conditions and their exp

![Large sample standard errors of kappa and weighted kappa | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/f2c9636d43a08e20f5383dbf3b208bd35a9377b0/4-Table2-1.png)
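Weighted kappa extends kappa to ordered categories by penalising near-misses less than distant ones: κ_w = 1 − Σ w_ij o_ij / Σ w_ij e_ij, where o_ij and e_ij are the observed and chance-expected cell proportions and w_ij is a disagreement weight. The sketch below computes only the point estimate, not the large-sample standard errors discussed in the linked work. The function name and the `scheme` parameter are hypothetical. It assumes at least two ordered categories and uses linear |i − j|/(k − 1) or quadratic ((i − j)/(k − 1))² weights.

```python
def weighted_kappa(confusion, scheme="linear"):
    """Weighted kappa point estimate from a k x k table of ordered categories.

    Hypothetical helper; scheme is "linear" or "quadratic" disagreement weights.
    """
    if scheme not in ("linear", "quadratic"):
        raise ValueError("scheme must be 'linear' or 'quadratic'")
    k = len(confusion)
    if k < 2:
        raise ValueError("need at least two ordered categories")
    n = sum(sum(row) for row in confusion)
    # Marginal proportions for rater A (rows) and rater B (columns).
    rows = [sum(confusion[i]) / n for i in range(k)]
    cols = [sum(confusion[i][j] for i in range(k)) / n for j in range(k)]

    def weight(i, j):
        # Disagreement weight: 0 on the diagonal, 1 for the most distant cells.
        d = abs(i - j) / (k - 1)
        return d if scheme == "linear" else d * d

    observed = sum(weight(i, j) * confusion[i][j] / n for i in range(k) for j in range(k))
    expected = sum(weight(i, j) * rows[i] * cols[j] for i in range(k) for j in range(k))
    return 1 - observed / expected


table = [[10, 2, 0], [1, 8, 3], [0, 2, 14]]
print(round(weighted_kappa(table, "linear"), 3), round(weighted_kappa(table, "quadratic"), 3))
```

With only two categories, both weighting schemes reduce to ordinary Cohen's kappa. As a cross-check, scikit-learn's `cohen_kappa_score` accepts `weights="linear"` or `weights="quadratic"` on raw label vectors.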

![Understanding interobserver agreement: the kappa statistic | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table2-1.png)

![Understanding interobserver agreement: the kappa statistic | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/e45dcfc0a65096bdc5b19d00e4243df089b19579/3-Table3-1.png)