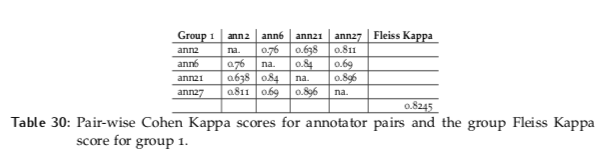

Inter-Annotator Agreement (IAA). Pair-wise Cohen kappa and group Fleiss'… | by Louis de Bruijn | Towards Data Science

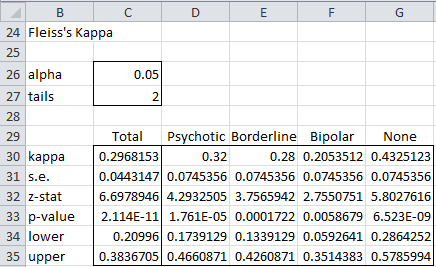

AgreeStat/360: computing weighted agreement coefficients (Conger's kappa, Fleiss' kappa, Gwet's AC1/AC2, Krippendorff's alpha, and more) for 3 raters or more

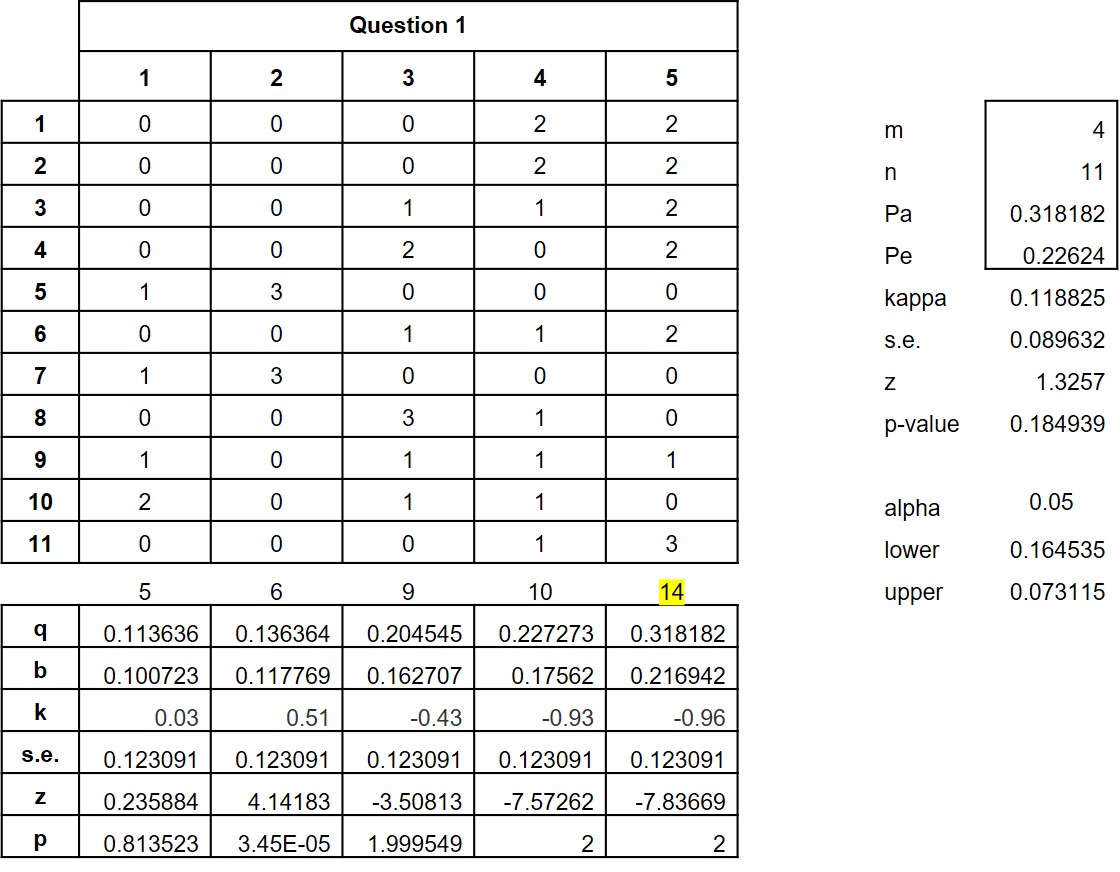

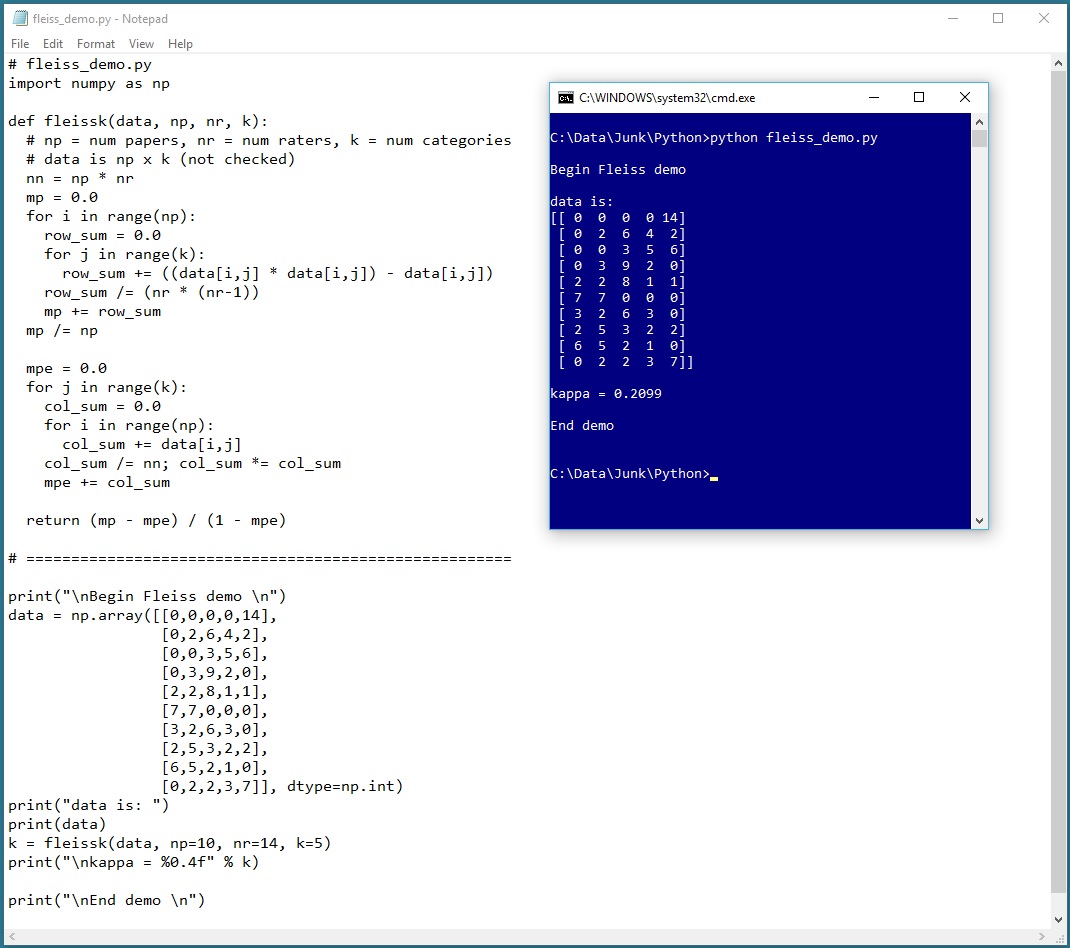

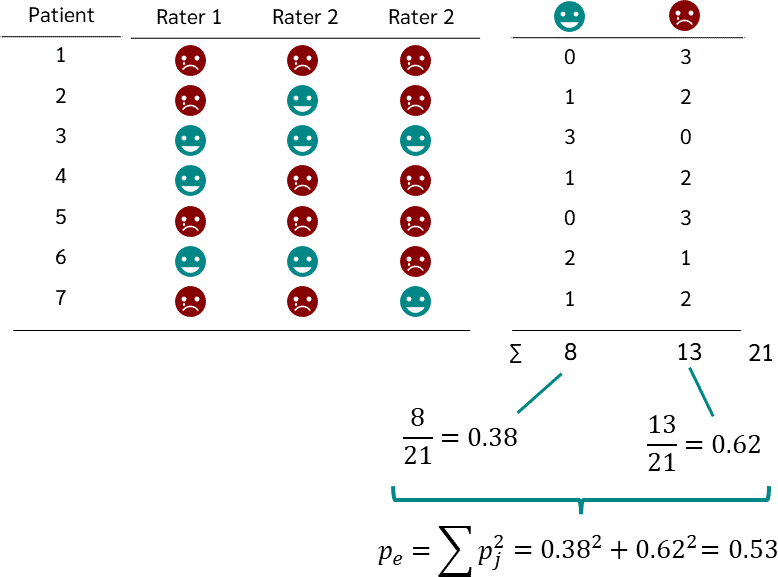

Fleiss' multirater kappa (1971), which is a chance-adjusted index of agreement for multirater categorization of nominal variables…
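To make the "chance-adjusted index of agreement" concrete, here is a minimal sketch of the standard Fleiss' kappa computation in plain Python. The function name `fleiss_kappa` and the toy ratings table are assumptions made for illustration; they are not taken from any of the sources captioned here. The input is a subjects-by-categories table of counts, and every subject is assumed to be rated by the same number of raters.

```python
import math


def fleiss_kappa(counts):
    """Fleiss' kappa for a table where counts[i][j] is the number of
    raters who assigned subject i to category j (hypothetical helper)."""
    n_subjects = len(counts)
    n_raters = sum(counts[0])
    if n_raters < 2:
        raise ValueError("at least two raters per subject are required")
    if any(sum(row) != n_raters for row in counts):
        raise ValueError("every subject must have the same number of ratings")

    n_categories = len(counts[0])
    total_ratings = n_subjects * n_raters

    # p_j: share of all ratings that fell into category j.
    p = [sum(row[j] for row in counts) / total_ratings
         for j in range(n_categories)]

    # P_i: proportion of agreeing rater pairs for subject i.
    per_subject = [
        (sum(c * c for c in row) - n_raters) / (n_raters * (n_raters - 1))
        for row in counts
    ]

    observed = sum(per_subject) / n_subjects   # mean observed agreement
    expected = sum(pj * pj for pj in p)        # agreement expected by chance

    if math.isclose(expected, 1.0):
        # All ratings in one category: kappa is undefined.
        return float("nan")
    return (observed - expected) / (1.0 - expected)


# Toy data (made up): 4 subjects, 3 raters, 2 categories.
toy = [[3, 0], [0, 3], [2, 1], [1, 2]]
print(round(fleiss_kappa(toy), 3))
```

Values near 1 indicate agreement well above chance, values near 0 indicate agreement no better than chance, and negative values indicate less agreement than chance would produce.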

![[PDF] Fuzzy Fleiss-kappa for Comparison of Fuzzy Classifiers | Semantic Scholar](https://d3i71xaburhd42.cloudfront.net/8aa54d5299fb48d6a7355c766573ecb520a43393/3-Table1-1.png)